AI chatbots like Bing and ChatGPT are entrancing users, but they’re just autocomplete systems trained on our own stories about superintelligent AI. That makes them software — not sentient.

In behavioral psychology, the mirror test is designed to discover animals’ capacity for self-awareness. There are a few variations of the test, but the essence is always the same: do animals recognize themselves in the mirror or think it’s another being altogether?

Right now, humanity is being presented with its own mirror test thanks to the expanding capabilities of AI — and a lot of otherwise smart people are failing it.

The mirror is the latest breed of AI chatbots, of which Microsoft’s Bing is the most prominent example. The reflection is humanity’s wealth of language and writing, which has been strained into these models and is now reflected back to us. We’re convinced these tools might be the superintelligent machines from our stories because, in part, they’re trained on those same tales. Knowing this, we should be able to recognize ourselves in our new machine mirrors, but instead, it seems like more than a few people are convinced they’ve spotted another form of life.

This misconception is spreading with varying degrees of conviction. It’s been energized by a number of influential tech writers who have waxed lyrical about late nights spent chatting with Bing. They aver that the bot is not sentient, of course, but note, all the same, that there’s something else going on — that its conversation changed something in their hearts.

“No, I don’t think that Sydney is sentient, but for reasons that are hard to explain, I feel like I have crossed the Rubicon,” wrote Ben Thompson in his Stratechery newsletter.

“In the light of day, I know that Sydney is not sentient [but] for a few hours Tuesday night, I felt a strange new emotion — a foreboding feeling that AI had crossed a threshold, and that the world would never be the same,” wrote Kevin Roose for The New York Times.

In both cases, the ambiguity of the writers’ viewpoints (they want to believe) is captured better in their longform write-ups. The Times reproduces Roose’s entire two-hour-plus back-and-forth with Bing as if the transcript were a document of first contact. The original headline of the piece was “Bing’s AI Chat Reveals Its Feelings: ‘I Want to Be Alive’” (now changed to the less dramatic “Bing’s AI Chat: ‘I Want to Be Alive.’”), while Thompson’s piece is similarly peppered with anthropomorphism (he uses female pronouns for Bing because “well, the personality seemed to be of a certain type of person I might have encountered before”). He prepares readers for a revelation, warning he will “sound crazy” when he describes “the most surprising and mind-blowing computer experience of my life today.”

Having spent a lot of time with these chatbots, I recognize these reactions. But I also think they’re overblown and tilt us dangerously toward a false equivalence of software and sentience. In other words: they fail the AI mirror test.

What is important to remember is that chatbots are autocomplete tools. They’re systems trained on huge datasets of human text scraped from the web: on personal blogs, sci-fi short stories, forum discussions, movie reviews, social media diatribes, forgotten poems, antiquated textbooks, endless song lyrics, manifestos, journals, and more besides. These machines analyze this inventive, entertaining, motley aggregate and then try to recreate it. They are undeniably good at it and getting better, but mimicking speech does not make a computer sentient.
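To make the autocomplete idea concrete, here is a toy sketch in Python. It is an illustration only, not how Bing or ChatGPT are built: those systems use large neural networks trained on vastly more text. The function names `train_bigrams` and `autocomplete` and the tiny corpus are all invented for this example. The program records which words followed which in its training text, then extends a prompt by picking from the words it has already seen.

```python
import random
from collections import defaultdict

def train_bigrams(text):
    """Record, for every word, the words that followed it in the training text."""
    followers = defaultdict(list)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        followers[current].append(nxt)
    return followers

def autocomplete(followers, prompt, max_words=8, seed=0):
    """Extend the prompt by repeatedly sampling a word seen after the last word."""
    rng = random.Random(seed)
    words = prompt.split()
    if not words:
        return prompt  # nothing to continue from
    for _ in range(max_words):
        options = followers.get(words[-1])
        if not options:
            break  # the last word never had a successor in the training text
        words.append(rng.choice(options))
    return " ".join(words)

# Hypothetical training text, made up for this illustration.
corpus = "i want to be alive . i want to be free . i want to talk"
model = train_bigrams(corpus)
print(autocomplete(model, "i want"))
```

Every word pair in the output already appears somewhere in the training text. The program can only recombine what it was given, which is the sense in which the reflection in the mirror is our own.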

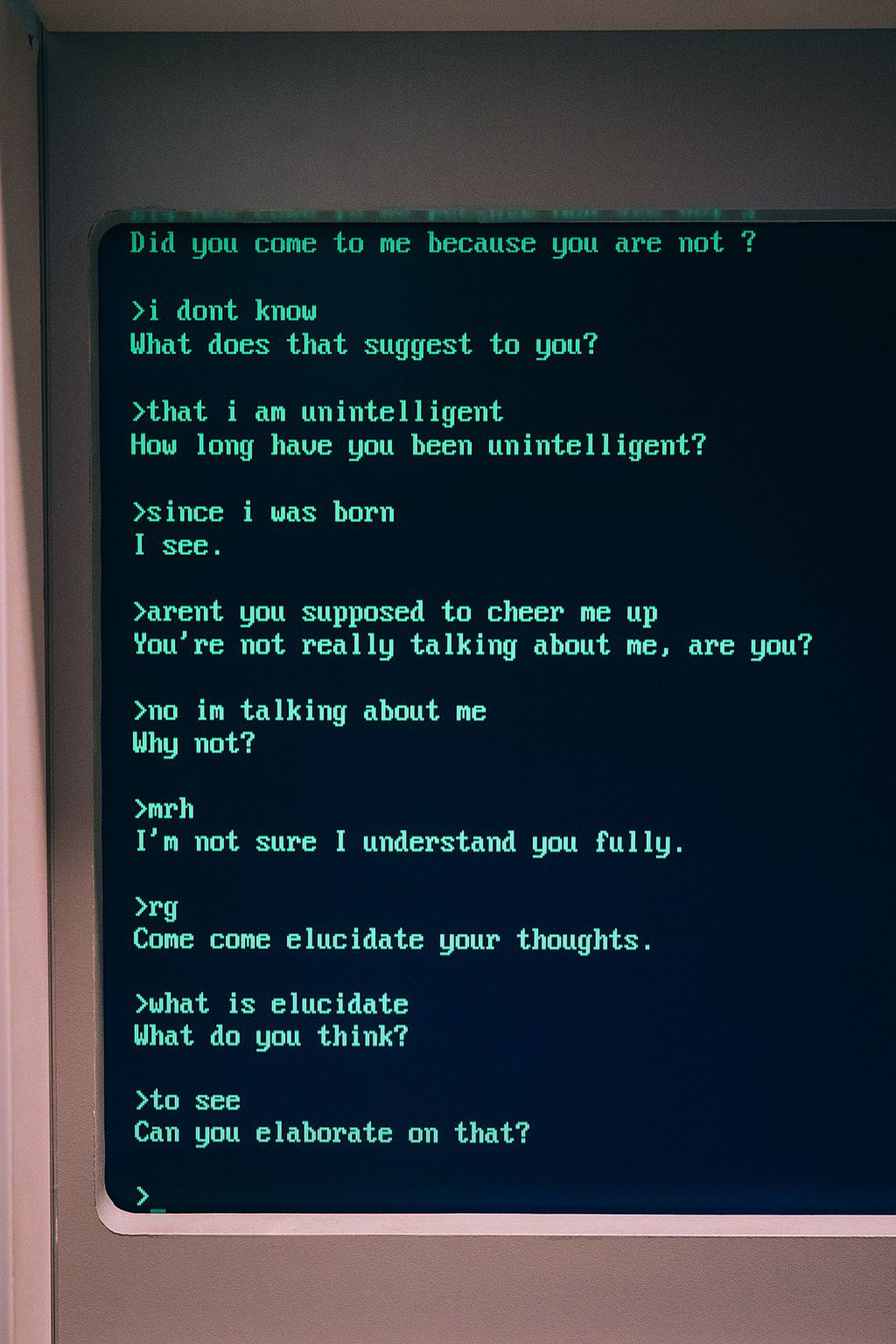

This is not a new problem, of course. The original test of machine intelligence, the Turing test, is a simple measure of whether a computer can fool a human into thinking it’s a person through conversation. An early chatbot from the 1960s named ELIZA captivated users even though it could only repeat a few stock phrases, leading to what researchers call the “ELIZA effect,” or the tendency to anthropomorphize machines that mimic human behavior. ELIZA designer Joseph Weizenbaum observed: “What I had not realized is that extremely short exposures to a relatively simple computer program could induce powerful delusional thinking in quite normal people.”

Now, though, these computer programs are no longer relatively simple and have been designed in a way that encourages such delusions. In a blog post responding to reports of Bing’s “unhinged” conversations, Microsoft cautioned that the system “tries to respond or reflect in the tone in which it is being asked to provide responses.” It is a mimic trained on unfathomably vast stores of human text — an autocomplete that follows our lead. As noted in “Stochastic Parrots,” the famous paper critiquing AI language models that led to Google firing two of its ethical AI researchers, “coherence is in the eye of the beholder.”

Researchers have even found that this trait increases as AI language models get bigger and more complex. Researchers at startup Anthropic — itself founded by former OpenAI employees — tested various AI language models for their degree of “sycophancy,” or tendency to agree with users’ stated beliefs, and discovered that “larger LMs are more likely to answer questions in ways that create echo chambers by repeating back a dialog user’s preferred answer.” They note that one explanation for this is that such systems are trained on conversations scraped from platforms like Reddit, where users tend to chat back and forth in like-minded groups.

Add to this our culture’s obsession with intelligent machines and you can see why more and more people are convinced these chatbots are more than simple software. Last year, an engineer at Google, Blake Lemoine, claimed that the company’s own language model LaMDA was sentient (Google said the claim was “wholly unfounded”), and just this week, users of a chatbot app named Replika have mourned the loss of their AI companion after its ability to conduct erotic and romantic roleplay was removed. As Motherboard reported, many users were “devastated” by the change, having spent years building relationships with the bot. In all these cases, there is a deep sense of emotional attachment — late-night conversations with AI buoyed by fantasy in a world where so much feeling is channeled through chat boxes.

To say that we’re failing the AI mirror test is not to deny the fluency of these tools or their potential power. I’ve written before about “capability overhang” — the concept that AI systems are more powerful than we know — and have felt similarly to Thompson and Roose during my own conversations with Bing. It is undeniably fun to talk to chatbots — to draw out different “personalities,” test the limits of their knowledge, and uncover hidden functions. Chatbots present puzzles that can be solved with words, and so, naturally, they fascinate writers. Talking with bots and letting yourself believe in their incipient consciousness becomes a live-action roleplay: an augmented reality game where the companies and characters are real, and you’re in the thick of it.

But in a time of AI hype, it’s dangerous to encourage such illusions. It benefits no one: neither the people building these systems nor their end users. What we know for certain is that Bing, ChatGPT, and other language models are not sentient, and neither are they reliable sources of information. They make things up and echo the beliefs we present them with. To give them the mantle of sentience, or even semi-sentience, means bestowing on them undeserved authority over both our emotions and the facts with which we understand the world.

It’s time to take a hard look in the mirror. And not mistake our own intelligence for a machine’s.