In a briefing on Thursday afternoon, Apple confirmed previously reported plans to deploy new technology within iOS, macOS, watchOS, and iMessage that will detect potential child abuse imagery, but clarified crucial details from the ongoing project. For devices in the US, new versions of iOS and iPadOS rolling out this fall have “new applications of cryptography to help limit the spread of CSAM [child sexual abuse material] online, while designing for user privacy.”

The project is also detailed in a new “Child Safety” page on Apple’s website. The most invasive and potentially controversial implementation is the system that performs on-device scanning before an image is backed up to iCloud. According to the description, scanning does not occur until a file is being backed up to iCloud, and Apple only receives data about a match if the cryptographic vouchers (uploaded to iCloud along with the image) for a particular account meet a threshold of matches against known CSAM.

For years, Apple has used hash systems to scan for child abuse imagery sent over email, in line with similar systems at Gmail and other cloud email providers. The program announced today will apply the same scans to user images stored in iCloud Photos, even if the images are never sent to another user or otherwise shared.

In a PDF provided along with the briefing, Apple justified its moves for image scanning by describing several restrictions that are included to protect privacy:

Apple does not learn anything about images that do not match the known CSAM database.

Apple can’t access metadata or visual derivatives for matched CSAM images until a threshold of matches is exceeded for an iCloud Photos account.

The risk of the system incorrectly flagging an account is extremely low. In addition, Apple manually reviews all reports made to NCMEC to ensure reporting accuracy.

Users can’t access or view the database of known CSAM images.

Users can’t identify which images were flagged as CSAM by the system.

The new details build on concerns leaked earlier this week, but also add a number of safeguards that should protect against the privacy risks of such a system. In particular, the threshold system ensures that lone errors will not generate alerts, allowing Apple to target an error rate of one false alert per trillion users per year. The hashing system is also limited to material flagged by the National Center for Missing and Exploited Children (NCMEC), and to images uploaded to iCloud Photos. Once an alert is generated, it is reviewed by Apple and NCMEC before law enforcement is alerted, providing an additional safeguard against the system being used to detect non-CSAM content.
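To see why a match threshold suppresses lone errors, here is a minimal arithmetic sketch. It is not Apple's method: it assumes each image falsely matches independently with a fixed probability, and the function name `prob_account_flagged` and every number in it (library size, per-image false-match rate, thresholds) are hypothetical values chosen for illustration. Apple did not disclose such figures in the briefing described here.

```python
from math import comb


def prob_account_flagged(n_images: int, p_false_match: float, threshold: int) -> float:
    """Chance that at least `threshold` of `n_images` innocent images falsely match.

    Assumes independent false matches with the same probability per image
    (a simplifying assumption, not a claim about the real system).
    """
    # Probability of seeing fewer than `threshold` false matches (binomial CDF).
    below_threshold = sum(
        comb(n_images, k) * p_false_match**k * (1 - p_false_match) ** (n_images - k)
        for k in range(threshold)
    )
    # Complement: at least `threshold` false matches, which would trigger an alert.
    return 1.0 - below_threshold


if __name__ == "__main__":
    # Hypothetical account: 1,000 photos, 1-in-1,000 false-match rate per photo.
    for t in (1, 5, 10):
        print(f"threshold={t}: {prob_account_flagged(1000, 0.001, t):.3e}")
```

Under these made-up numbers, a single false match is fairly likely, while requiring ten matches makes a false alert several orders of magnitude rarer. That is the general effect the threshold is meant to deliver, whatever the real parameters are.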

Apple commissioned technical assessments of the system from three independent cryptographers (PDFs 1, 2, and 3), who found it to be mathematically robust. “In my judgement this system will likely significantly increase the likelihood that people who own or traffic in such pictures (harmful users) are found; this should help protect children,” said professor David Forsyth, chair of computer science at University of Illinois, in one of the assessments. “The accuracy of the matching system, combined with the threshold, makes it very unlikely that pictures that are not known CSAM pictures will be revealed.”

However, Apple said other child safety groups were likely to be added as hash sources as the program expands, and the company did not commit to making the list of partners publicly available going forward. That is likely to heighten anxieties about how the system might be exploited by the Chinese government, which has long sought greater access to iPhone user data within the country.

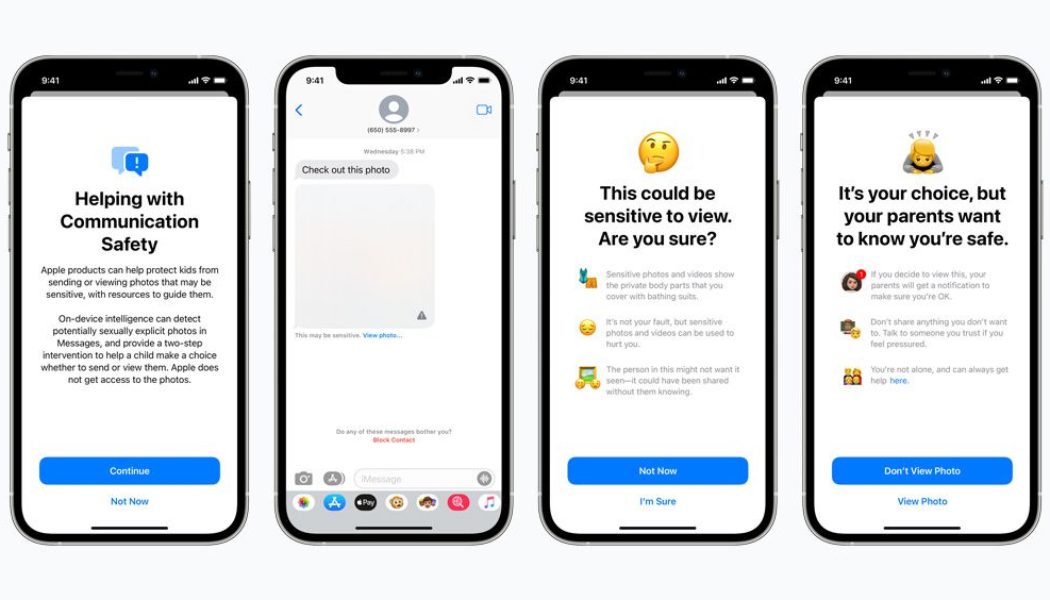

Alongside the new measures in iCloud Photos, Apple added two additional systems to protect young iPhone owners at risk of child abuse. The Messages app already did on-device scanning of image attachments for children’s accounts to detect content that’s potentially sexually explicit. Once detected, the content is blurred and a warning appears. A new setting that parents can enable on their family iCloud accounts will trigger a message telling the child that if they view (incoming) or send (outgoing) the detected image, their parents will get a message about it.

Apple is also updating how Siri and the Search app respond to queries about child abuse imagery. Under the new system, the apps “will explain to users that interest in this topic is harmful and problematic, and provide resources from partners to get help with this issue.”